Open Source HPC Benchmarking

Andy Turner, EPCC

30 Oct 2018

a.turner@epcc.ed.ac.uk

Slide content is available under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

This means you are free to copy and redistribute the material and adapt and build on the material under the following terms: You must give appropriate credit, provide a link to the license and indicate if changes were made. If you adapt or build on the material you must distribute your work under the same license as the original.

Note that this presentation contains images owned by others. Please seek their permission before reusing these images.

Built using reveal.js

reveal.js is available under the MIT licence

Overview

- Introduction

- Open source benchmarking

- Benchmarking results

- Live demo

- Next steps

Introduction

Why benchmarking now?

- Lots of different HPC systems available to UK researchers

- ARCHER: UK National Supercomputing Service

- DiRAC: Astronomy and Particle Physics National HPC Service

- National Tier2 HPC Services

- PRACE: pan-European HPC facilities

- Commercial cloud providers

- A diversity of architectures available (or coming soon):

- Intel Xeon CPU

- NVidia GPU

- Arm64 CPU

- AMD EPYC CPU

- …(variety of interconnects and I/O systems)

Audience: users and service personnel

- Give researchers information required to choose best service for their research

- Allow service staff to understand their service performance and help plan procurements

Benchmarks should aim to test full software with realistic use cases

Initial approach

- Use software in the same way as a researcher would:

- Use already installed versions if possible

- Compile sensibly for performance but do not extensively optimise

- May use different versions of software on different platforms (but try to use newest version available)

- Additional synthetic benchmarks to test I/O performance

Open source benchmarking

https://xkcd.com/225/


What is open source benchmarking?

- Full output data from benchmark runs are freely available

- Full information on compilation (if performed) is freely available

- Full information on how benchmarks are run is freely available

- Input data for benchmarks are freely available

- Source for all analysis programs is freely available
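As a rough illustration of the checklist above, each benchmark run could be stored alongside a small machine-readable record. This is only a sketch: the class, field names, paths and values below are hypothetical and are not taken from the benchmark repository.

```python
import json
from dataclasses import dataclass, asdict, field


@dataclass
class BenchmarkRecord:
    """Hypothetical provenance record for one benchmark run."""
    system: str
    application: str
    version: str
    build_notes: str      # how the software was compiled, or "pre-installed"
    submit_script: str    # path to the job submission script that was used
    input_files: list = field(default_factory=list)   # paths to input data
    output_files: list = field(default_factory=list)  # paths to raw output

    def missing(self):
        """Return the names of fields that are still empty."""
        return [name for name, value in asdict(self).items() if not value]


# Illustrative values only
record = BenchmarkRecord(
    system="ExampleSystem",
    application="ExampleApp",
    version="1.0",
    build_notes="pre-installed module",
    submit_script="run/submit.sh",
    input_files=["input/benchmark.in"],
)

print(json.dumps(asdict(record), indent=2))
print("Missing:", record.missing())
```

A check like `missing()` makes it easy to spot runs that were published without their raw output or input data.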

Problems with benchmarking studies

Benchmarking is about quantitative comparison

Most benchmarking studies do not lend themselves to quantitative comparison

- Do not publish raw results, only processed data

- Do not publish sufficient detail on how data was processed

- Do not provide input datasets and job submission scripts

- Do not provide details of how the software was compiled

Benefits of open source approach

- Allows proper comparison with other studies

- Data can be reused (in different ways) by other people

- Easy to share and collaborate with others

- Verification and checking - people can check your approach and analysis

Results

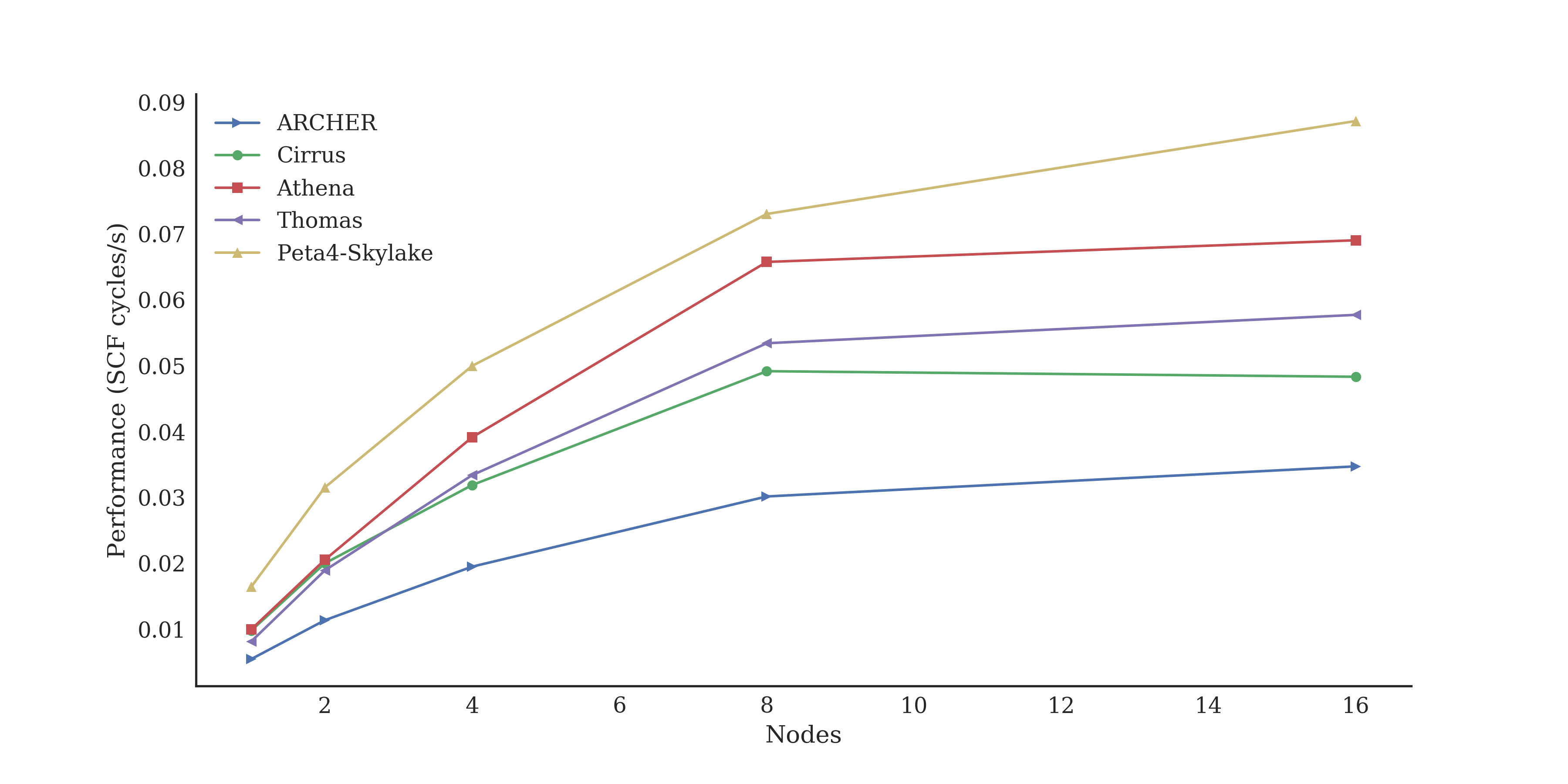

Performance plots

- Neither runtime nor speedup is an ideal quantity to plot when comparing performance:

- Runtime makes it difficult to interpret performance change as node count increases

- Speedup does not show differences in absolute performance

- Plot performance instead: essentially the reciprocal of the runtime

- Units usually dependent on software, e.g. ns/day, iter/s, years/day
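The point about speedup hiding absolute differences can be sketched with a few lines of Python. The two systems and their runtimes below are invented purely for illustration; they are not measured results.

```python
def performance(runtime_s):
    """Performance as the reciprocal of runtime (runs per second)."""
    return 1.0 / runtime_s


def speedup(runtimes):
    """Speedup relative to the smallest node count in the dict."""
    base = runtimes[min(runtimes)]
    return {nodes: base / t for nodes, t in runtimes.items()}


# Hypothetical runtimes in seconds, keyed by node count
system_a = {1: 1000.0, 2: 500.0, 4: 250.0}
system_b = {1: 500.0, 2: 250.0, 4: 125.0}

# The speedup curves are identical ...
print("Same speedup:", speedup(system_a) == speedup(system_b))

# ... but the performance values show system B is faster at every node count
for nodes in sorted(system_a):
    ratio = performance(system_b[nodes]) / performance(system_a[nodes])
    print(f"{nodes} nodes: B/A performance ratio = {ratio:.2f}")
```

Plotting `performance` against node count keeps both the scaling behaviour and the absolute difference between systems visible on one axis.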

Multinode performance: CASTEP

Single node performance: GROMACS

| System | Architecture | Performance (ns/day) | Relative to ARCHER |
|---|---|---|---|
| ARCHER | 2x Intel Xeon E5-2697v2 (12 core) | 1.216 | 1.000 |
| Cirrus | 2x Intel Xeon E5-2695v4 (18 core) | 1.699 | 1.397 |
| Tesseract | 2x Intel Xeon Silver 4116 (12 core) | 1.216 | 1.088 |
| Peta4-Skylake | 2x Intel Xeon Gold 6142 (16 core) | 2.082 | 1.712 |
| Isambard | 2x Arm Cavium ThunderX2 (32 core) | 1.471 | 1.201 |
| Wilkes2-GPU | 4x NVidia V100 (PCIe) | 2.774 | 2.257 |
| JADE | 4x NVidia V100 (DGX1, NVlink) | 1.469 | 1.208 |
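The last column is each system's performance divided by the ARCHER value. A minimal sketch of that calculation, using a subset of rows from the table (the variable and function names are illustrative, not from the analysis scripts):

```python
# GROMACS single node performance in ns/day, copied from the table above
performance_ns_day = {
    "ARCHER": 1.216,
    "Cirrus": 1.699,
    "Peta4-Skylake": 2.082,
    "JADE": 1.469,
}


def relative_to(baseline, results):
    """Return each system's performance as a ratio to the baseline system."""
    base = results[baseline]
    return {system: round(value / base, 3) for system, value in results.items()}


ratios = relative_to("ARCHER", performance_ns_day)
for system, ratio in ratios.items():
    print(f"{system:15s} {ratio:.3f}")
```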

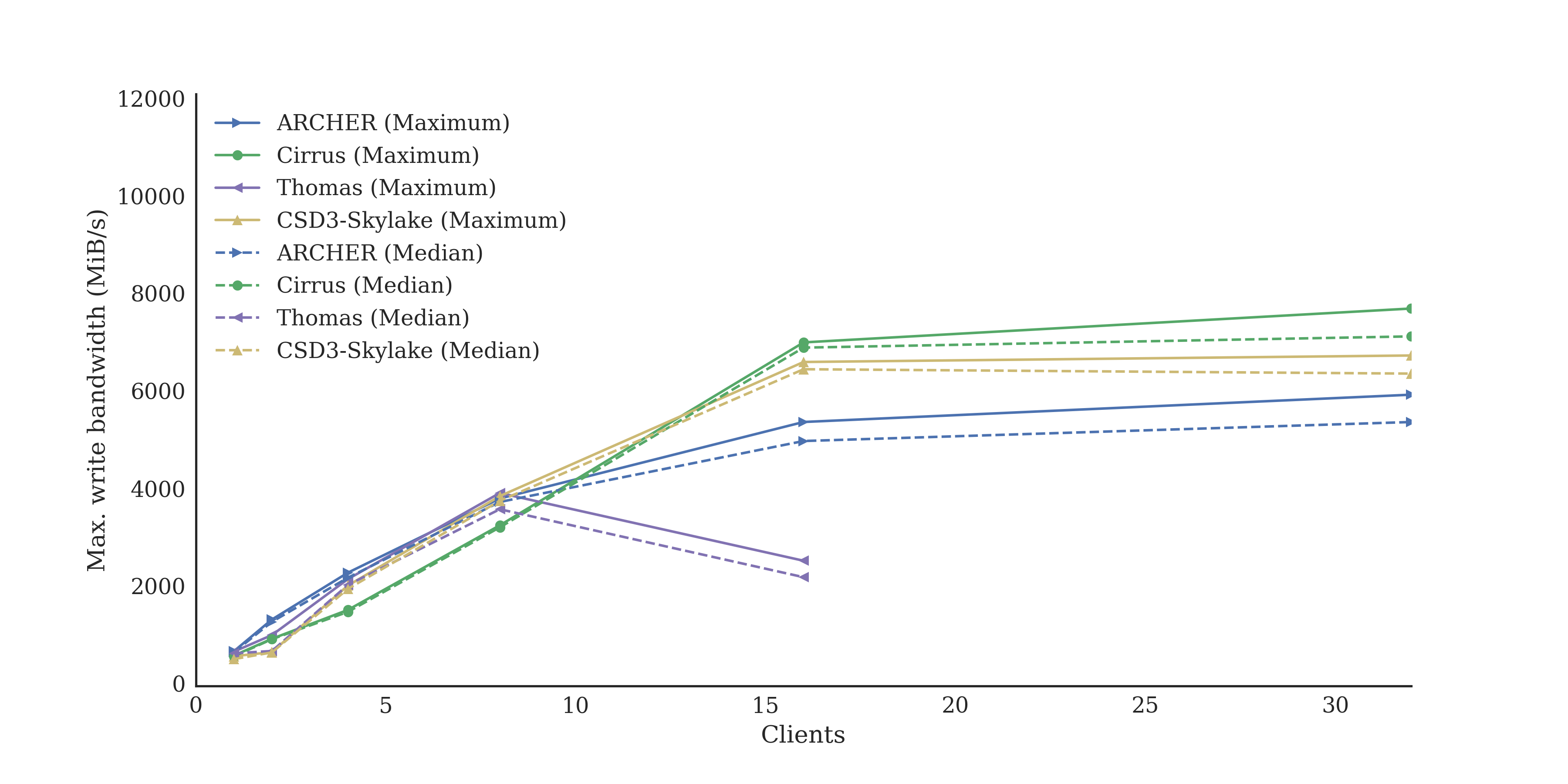

I/O parallel write bandwidth: benchio

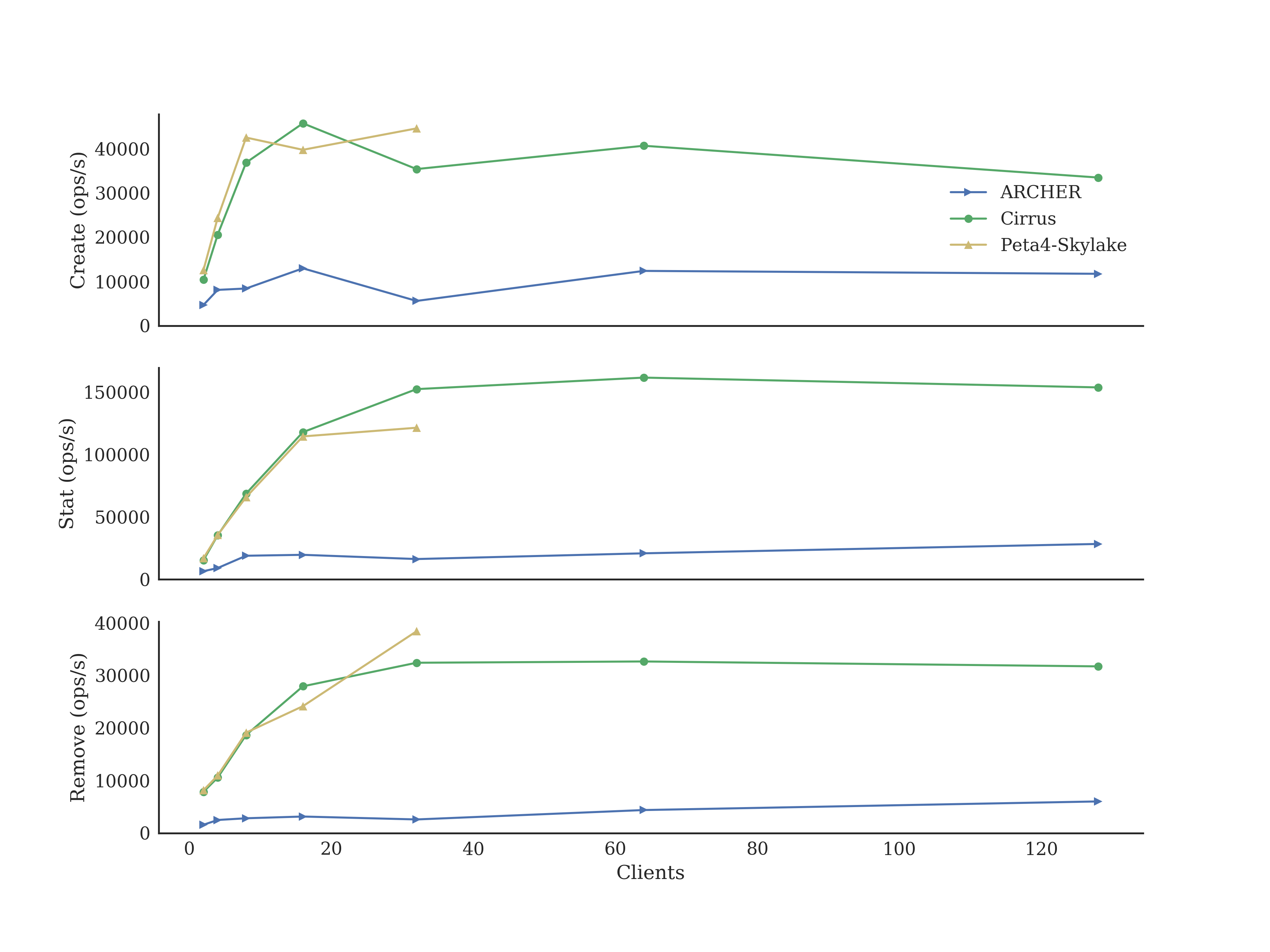

I/O parallel MDS performance: mdtest

Live demo!!

Next steps

- Write a report on single node performance comparisons

- Run multi-node Arm processor tests as soon as systems are available

- Run benchmarks on commercial cloud offerings

- Include ML/DL benchmarks in set

- Perform performance analysis on existing benchmarks and add to repository

Campaigning for the recognition of the RSE role, creating a community of RSEs and organising events for RSEs to meet, exchange knowledge and collaborate.

Join the community!

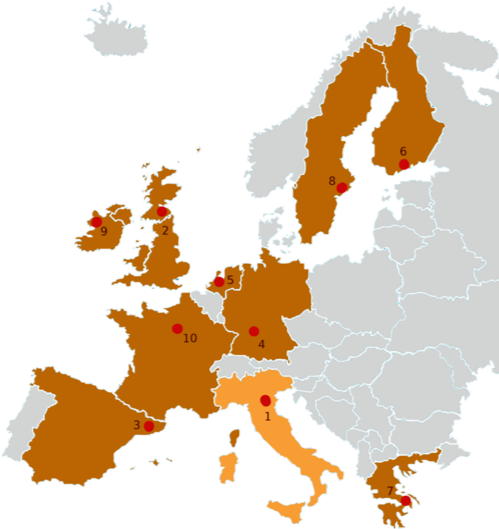

HPC Europa 3: Transnational access programme

EC-funded collaborative research visits using HPC

- Applicants can be any level: masters to professors

- Visit duration is from 2 to 13 weeks

- Funding for travel and accommodation / living expenses

- Includes access to world-class HPC facilities

- Training and support provided

- Researchers in UK can be visitors or hosts

- Easy application procedure: 4 closing dates per year – apply any time

Information on facilities and how to access them

Open Source, community-developed resource